Within hours, people were exploring the contents. The structure looked familiar. Very familiar. It looked exactly like the skills pattern Anthropic had announced just two months earlier. OpenAI, without fanfare or announcement, had quietly adopted its competitor's approach to extending AI capabilities.

This wasn't just another feature launch. This was a validation of something more significant. When competitors converge on the same solution, especially this quickly, it signals that something fundamental has shifted. The AI industry had found an answer to a problem that had plagued it for years: how to extend AI capabilities without drowning in complexity.

The story of how this convergence happened, what it reveals about the current state of AI tooling, and what it means for everyone building with these systems deserves deeper examination than a simple announcement tweet can provide.

The /home/oai/skills Tweet That Revealed Everything

A Hidden Directory Waiting to Be Found

The /home/oai/skills directory wasn't new when Elias found it. It had been sitting there, accessible but undocumented, waiting for someone to look. ChatGPT's code execution environment has always been somewhat opaque. Users know they can run Python, access certain libraries, and create files. But the full filesystem structure? That remained mostly unexplored territory.

Elias's discovery was simple enough to verify. Open ChatGPT. Ask it to create a ZIP file of /home/oai/skills. Download the result. Examine the contents. What emerged was a collection of skill folders: documents, spreadsheets, and PDFs. Each folder contained a SKILL.md file with instructions, supporting Python scripts, and a structure that mirrored exactly what Anthropic had published in their skills repository.
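The "examine the contents" step can be sketched in a few lines of Python. This is only an illustration: the function name `summarize_skills_archive` and the assumption that every member path contains a `skills` directory are hypothetical, not details confirmed by the discovery itself.

```python
import zipfile
from collections import defaultdict
from pathlib import PurePosixPath


def summarize_skills_archive(zip_file):
    """Map each skill folder in the archive to a sorted list of its files.

    Assumes (hypothetically) that member paths contain a "skills" directory,
    for example "home/oai/skills/pdf/SKILL.md".
    """
    skills = defaultdict(list)
    with zipfile.ZipFile(zip_file) as archive:
        for name in archive.namelist():
            if name.endswith("/"):
                continue  # directory entry, not a file
            parts = PurePosixPath(name).parts
            if "skills" not in parts:
                continue
            start = parts.index("skills") + 1
            if len(parts) - start < 2:
                continue  # file sits directly under skills/, not inside a skill
            skill = parts[start]
            relative = "/".join(parts[start + 1:])
            skills[skill].append(relative)
    return {skill: sorted(files) for skill, files in skills.items()}
```

Calling `summarize_skills_archive("skills.zip")` on a downloaded archive (the filename is a placeholder) would return a dictionary keyed by skill folder, which makes it easy to see which folders ship a SKILL.md and which supporting files sit beside it.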

/home/oai/skills/
├── documents/
│   ├── SKILL.md
│   └── templates/
├── spreadsheets/
│   ├── SKILL.md
│   └── scripts/
├── pdf/
│   ├── SKILL.md
│   ├── renderer.py
│   └── validation.py
└── ...

The similarity wasn't coincidental convergent evolution. OpenAI had looked at Anthropic's approach and decided it was worth adopting. No press release. No blog post announcement. No marketing campaign about revolutionary new capability extension mechanisms. Just a quiet implementation of a pattern that worked.

This silent adoption speaks volumes. In an industry where every feature launch gets a full marketing treatment, choosing to adopt a competitor's pattern without fanfare suggests something different. This wasn't about claiming innovation. This was about recognizing a good solution when one emerged.

When the AI Noticed Its Own Font Rendering Bug

From Theory to Actual Functionality

Theory is one thing. Actual functionality is another. The test was straightforward: ask ChatGPT to create a PDF about something requiring both web research and careful formatting. The rimu tree breeding season for kakapo birds provided perfect subject matter.

The request went to GPT-5.2 Thinking, which immediately announced it was reading the PDF skill instructions. Then it began web searching for current information about rimu masting and kakapo breeding predictions for 2026. Eleven minutes later, a professionally formatted PDF emerged with proper typography, citations, data tables, and careful attention to detail.

⚡ Self-Correcting Workflow

This wasn't preprogrammed logic checking for font support. The skill instructions included guidance about rendering PDFs to images for quality checking. The AI followed those instructions, noticed the font rendering problem visually, understood what caused it, and corrected the issue. The skill provided the framework. The AI supplied the problem-solving.

The rimu breeding report was accurate, well-sourced, properly formatted, and ready for actual use. More importantly, it demonstrated that OpenAI's implementation wasn't superficial. The skills actually worked as intended, guiding AI through complex multi-step workflows with quality validation built in.

Building a Datasette Plugin in Minutes with Hidden Skills

Skills Work Beyond the Browser

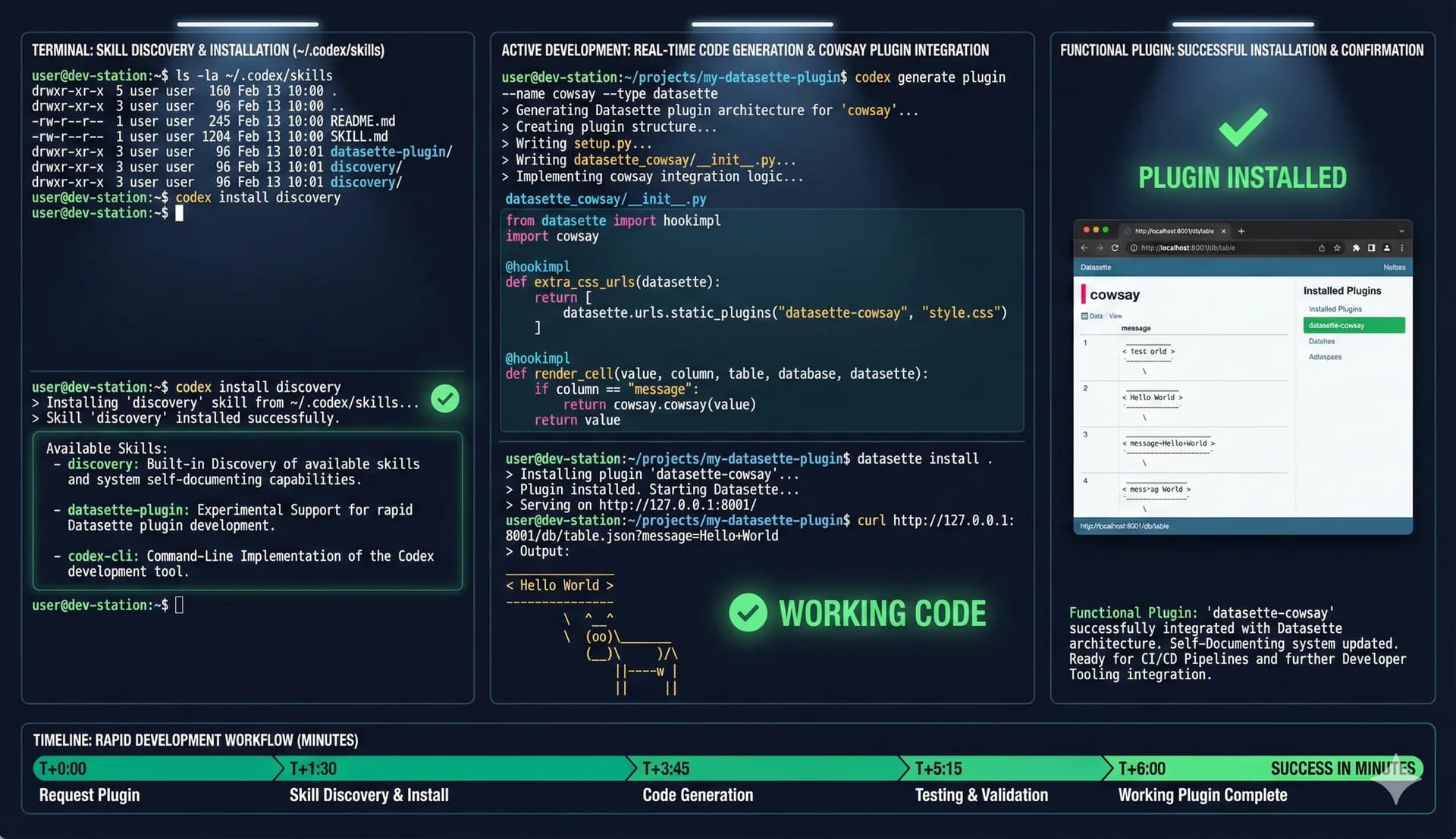

ChatGPT wasn't the only place OpenAI adopted skills. Two weeks before the /home/oai/skills discovery, their open-source Codex CLI tool gained experimental skills support. The implementation differed slightly from ChatGPT but followed the same core pattern. Any folder in ~/.codex/skills became an available skill. The SKILL.md file format remained identical.

# ~/.codex/skills/datasette-plugin/SKILL.md
---
name: datasette-plugin
description: Generate Datasette plugins with proper structure
---

## Instructions

When creating Datasette plugins:

1. Use the standard plugin architecture
2. Include proper setup.py configuration
3. Add templates for custom pages
4. Implement test fixtures

## Example Request

"Create a Datasette plugin that displays cowsay output"

Testing this proved straightforward. Create a skill for generating Datasette plugins. Install it in the Codex skills directory. Start Codex with skills enabled. Request a specific plugin. Watch it work.

The request was simple: create a Datasette plugin adding a page that displays cowsay output for the provided text. Codex read the Datasette plugin skill, understood the plugin architecture, generated working code with proper structure, and produced a functional plugin ready for installation.

The whole interaction took minutes. The plugin worked on first try. More interesting was watching Codex list available skills before starting work. It showed both the custom Datasette skill and a built-in Discovery skill explaining how to find available skills. The system was self-documenting through skills themselves.
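To make the "any folder becomes a skill" idea concrete, here is a minimal sketch of how a tool could list available skills. It is not Codex's actual implementation; the function name `discover_skills` and the rule "a subfolder counts only if it contains a SKILL.md" are assumptions for illustration.

```python
from pathlib import Path


def discover_skills(skills_dir):
    """Return the names of subfolders that contain a SKILL.md file, sorted."""
    root = Path(skills_dir).expanduser()
    if not root.is_dir():
        return []  # no skills directory yet, so nothing is available
    return sorted(
        entry.name
        for entry in root.iterdir()
        if entry.is_dir() and (entry / "SKILL.md").is_file()
    )
```

With the skill above installed, `discover_skills("~/.codex/skills")` would be expected to include `datasette-plugin` in its result, while stray files and folders without a SKILL.md are ignored.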

This command-line implementation revealed something important. Skills weren't just a ChatGPT web interface feature. They worked in terminal environments, local development workflows, and CI/CD pipelines. The pattern scaled from browser interactions to developer tooling without modification.

Skills beyond the browser: the same pattern that works in ChatGPT works in terminal environments, local development workflows, and CI/CD pipelines without modification.

Platform-Agnostic by Accident: How Simplicity Enabled Portability

When Good Design Creates Unexpected Benefits

Here's what makes this adoption meaningful. Skills weren't designed for OpenAI's systems. They were built for Claude. Yet they worked immediately in ChatGPT and Codex CLI with minimal adaptation. This wasn't a coincidence. This was intentional simplicity paying dividends.

The skills pattern makes essentially no assumptions about the underlying AI system. A SKILL.md file is just markdown with YAML frontmatter. Supporting scripts are just scripts. Reference documentation is just documentation. Any AI system capable of reading files and executing code in an environment can use skills exactly as designed.
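Because the format is this plain, reading it takes very little code. The sketch below splits a SKILL.md into its frontmatter and body using only the standard library. It handles flat `key: value` pairs only and is not a full YAML parser; the function name `parse_skill_md` is hypothetical.

```python
def parse_skill_md(text):
    """Split SKILL.md text into (metadata dict, markdown body).

    Only flat "key: value" frontmatter is supported; nested YAML, lists,
    and quoting rules are deliberately out of scope for this sketch.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text  # no frontmatter block at all
    closing = None
    for index, line in enumerate(lines[1:], start=1):
        if line.strip() == "---":
            closing = index
            break
    if closing is None:
        return {}, text  # opening marker without a closing one
    metadata = {}
    for line in lines[1:closing]:
        if ":" in line:
            key, _, value = line.partition(":")
            metadata[key.strip()] = value.strip()
    body = "\n".join(lines[closing + 1:]).strip()
    return metadata, body
```

Anything that can run a function like this, or simply hand the file to a model as text, can consume a skill, which is the portability argument in miniature.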

Skills avoid lock-in through aggressive simplicity. They're just files. Any system that can read files and follow instructions can use them. This wasn't accidental design. This was deliberate minimalism that opened doors rather than creating gates.

This portability matters enormously for ecosystem development. Developers creating skills for Claude automatically create skills that work with OpenAI tools. Skills created for OpenAI tools work with other systems. The format itself is platform-agnostic because it makes almost no platform-specific demands.

The Vibe Engineering Validation

When Experts Design and Machines Execute

Between OpenAI's adoption becoming public and Christmas, two things happened that further validated the skills pattern. Both involved creating substantial software libraries using AI agents guided by comprehensive test suites. Both demonstrated that skills enable workflows previously impossible or impractical.

The interesting part wasn't that AI wrote code. The interesting part was the engineering process behind JustHTML. Emil, the library's author, hooked into comprehensive test suites from the start. He designed the core API himself. He built custom profilers and fuzzers. He threw away early implementations and started fresh with better approaches. The AI agents did the typing. Emil did the engineering.

⚡ The JustHTML Port

Ported from Python to JavaScript while watching a movie and decorating for Christmas. Two initial prompts. Minimal supervision.

This validated what people started calling vibe engineering: professional software development where coding agents handle mechanical code production while expert humans handle architecture, design, quality, and strategic decisions. The division of labor makes sense. Humans excel at judgment. Machines excel at execution. Skills enable this division by packaging expert judgment in formats machines can execute.

The key enabler? A comprehensive test suite that lets the agent verify its own work continuously. Design the test loop correctly, and agents can validate themselves.
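A test loop of this kind can be sketched as follows. The `make_change` callable is a hypothetical stand-in for the coding agent, and the structure is an assumption about how such a loop might be wired, not a description of any specific tool.

```python
import subprocess


def run_tests(command):
    """Run a test command and return (passed, combined output)."""
    result = subprocess.run(command, capture_output=True, text=True)
    return result.returncode == 0, result.stdout + result.stderr


def iterate_until_green(make_change, test_command, max_iterations=5):
    """Call make_change(feedback) and rerun the tests until they pass.

    make_change is a hypothetical stand-in for the agent: it receives the
    previous failure output (empty on the first call) and edits the code.
    Returns the number of iterations used, or None if tests never passed.
    """
    feedback = ""
    for iteration in range(1, max_iterations + 1):
        make_change(feedback)
        passed, output = run_tests(test_command)
        if passed:
            return iteration
        feedback = output  # the failure log drives the next attempt
    return None
```

In practice the test command might be something like `["pytest", "-q"]`. The design point is that the human decides what "passing" means, and the loop lets the agent check itself against that definition without supervision.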

The vibe engineering paradigm: expert humans design architecture and tests, AI agents execute implementation, continuous validation ensures quality. Skills package the expertise that makes this possible.

Token Economics: The Invisible Force Behind Adoption

Why Markets Chose Skills

When competitors adopt the same approach, especially this quickly, it reveals market forces at work. The skills pattern emerged because it solved real problems that complex alternatives couldn't solve efficiently.

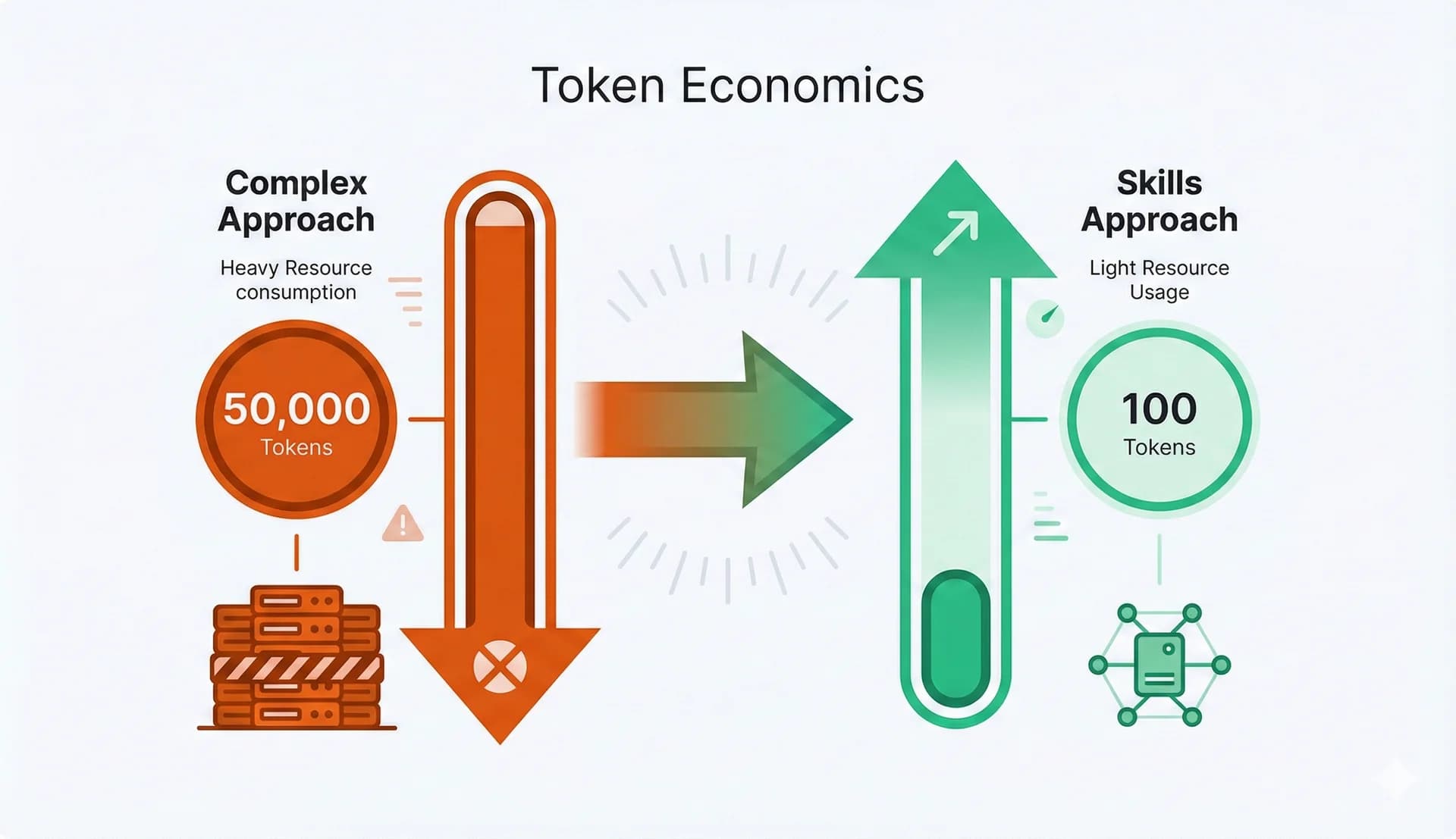

[Figure: context usage in two panels, "Before any actual work begins" and "Full content loads only when needed"]

The primary problem was token economics. Previous approaches consumed enormous context window space describing capabilities before any actual work could begin. GitHub's MCP implementation alone used tens of thousands of tokens. Add more capabilities and you quickly exhaust available context, leaving little room for actual tasks.

Skills solved this through progressive disclosure. Scan lightweight metadata at startup, consuming roughly 100 tokens per skill. Load full instructions only when relevant. Load supporting resources only when needed. Scale to dozens or hundreds of skills without degrading performance. The more capabilities you add, the more dramatic the efficiency advantage becomes.
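Progressive disclosure can be sketched as an index that keeps only one-line summaries in memory and fetches full instructions on first use. The class name `SkillIndex` and its methods are hypothetical, chosen for illustration rather than taken from any vendor's implementation.

```python
class SkillIndex:
    """Keep short summaries in context; load full instructions lazily."""

    def __init__(self):
        self._summaries = {}  # name -> short description (always in context)
        self._loaders = {}    # name -> zero-argument callable returning full text
        self._loaded = {}     # name -> full text, filled in on first use

    def register(self, name, description, loader):
        """Record a skill's summary and how to fetch its full instructions."""
        self._summaries[name] = description
        self._loaders[name] = loader

    def startup_context(self):
        """Return the only text shown to the model before a task is chosen."""
        return "\n".join(
            f"{name}: {description}"
            for name, description in sorted(self._summaries.items())
        )

    def load(self, name):
        """Fetch full instructions the first time a skill is actually needed."""
        if name not in self._loaded:
            self._loaded[name] = self._loaders[name]()
        return self._loaded[name]
```

Using the figure of roughly 100 tokens per skill, an index of 50 skills would cost about 5,000 tokens at startup, and the full text of any one skill is paid for only when `load` is called for it.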

The secondary problem was the creation barrier. Building ChatGPT plugins required web service implementation, authentication, API specifications, and approval processes. Creating MCP servers required protocol implementation, transport handling, and lifecycle management. Both demanded substantial technical investment before producing value.

Skills eliminated creation barriers almost entirely. Can you write markdown? You can create skills. Have scripts that work? Bundle them. Have documentation that helps? Include it. No API to implement. No protocol to learn. No framework to master. The barrier to contribution collapsed to the barrier to clear explanation.
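To show how low that barrier is, the sketch below writes a new skill folder with a SKILL.md in a handful of lines. The function name `create_skill` and the "## Instructions" heading are illustrative assumptions; values containing characters that are special in YAML (such as a colon) would need quoting, which this sketch does not attempt.

```python
from pathlib import Path


def create_skill(skills_dir, name, description, instructions):
    """Write <skills_dir>/<name>/SKILL.md with frontmatter and a body."""
    skill_dir = Path(skills_dir).expanduser() / name
    skill_dir.mkdir(parents=True, exist_ok=True)
    content = (
        "---\n"
        f"name: {name}\n"
        f"description: {description}\n"
        "---\n\n"
        "## Instructions\n\n"
        f"{instructions.strip()}\n"
    )
    skill_file = skill_dir / "SKILL.md"
    skill_file.write_text(content, encoding="utf-8")
    return skill_file
```

Supporting scripts and reference documents are then just additional files dropped into the same folder.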

The tertiary problem was portability. Features built for one platform stayed locked to that platform. ChatGPT plugins worked only in ChatGPT. MCP implementations required MCP-aware hosts. Moving capabilities between systems meant reimplementation from scratch. Skills achieved portability through simplicity.

The invisible force driving adoption: token efficiency through progressive disclosure, elimination of creation barriers, and portability through simplicity. Skills won because they solved real economic problems.

When Code Becomes Too Cheap to Meter

Economic Implications of AI-Powered Development

When you can port entire libraries between languages in hours with minimal effort, what happens to the economics of open source? The person who spent months carefully building JustHTML created genuine value through design decisions, architecture choices, test integration, and optimization strategies. Someone else creating a port in hours didn't contribute equivalent engineering effort.

The open source ecosystem traditionally balanced contribution and credit through effort visibility. Writing 9,000 lines of quality code took time and skill. That investment was visible and respected. When AI agents handle the mechanical production, the traditional signals break down. Judging contribution becomes harder.

The Shifting Value Proposition

The economic implications extend beyond open source. If complex software becomes this cheap to produce, what happens to software development as a profession? The coding agents don't replace senior engineers making architectural decisions. They replace the mechanical work of implementing those decisions. That's still significant displacement.

The optimistic view: coding agents free developers to focus on higher-value work. Design, architecture, user experience, strategic decisions. The pessimistic view: companies discover they need fewer developers when agents handle implementation. Reality probably lands somewhere between these extremes, varying by context.

What seems certain is that software production is undergoing rapid transformation. Skills enable and accelerate this transformation by making expertise packaging trivial. The social and economic adjustments will take years to fully play out. We're at the beginning of that process, not the end.

Stop Building Proprietary Extensions. Just Use Skills.

Clear Signals for Builders

If you're building AI-powered tools, applications, or workflows, the convergence around skills provides clear signals about which approaches have staying power.

Do create skills for your domain expertise

Whatever specialized knowledge you have, someone else needs it. Package expertise as skills and share them. The ecosystem grows through collective contribution.

Do design for progressive disclosure

Don't dump everything into context at once. Provide lightweight summaries that help systems decide when to load detailed information.

Do validate your skills with real usage

Skills that look good in theory but fail in practice don't help anyone. Test with actual scenarios. Iterate based on what works.

The skills ecosystem is young enough that early contributors have an outsized impact. The patterns established now will influence how millions of people extend AI capabilities. Participating in this early phase means shaping what comes next.

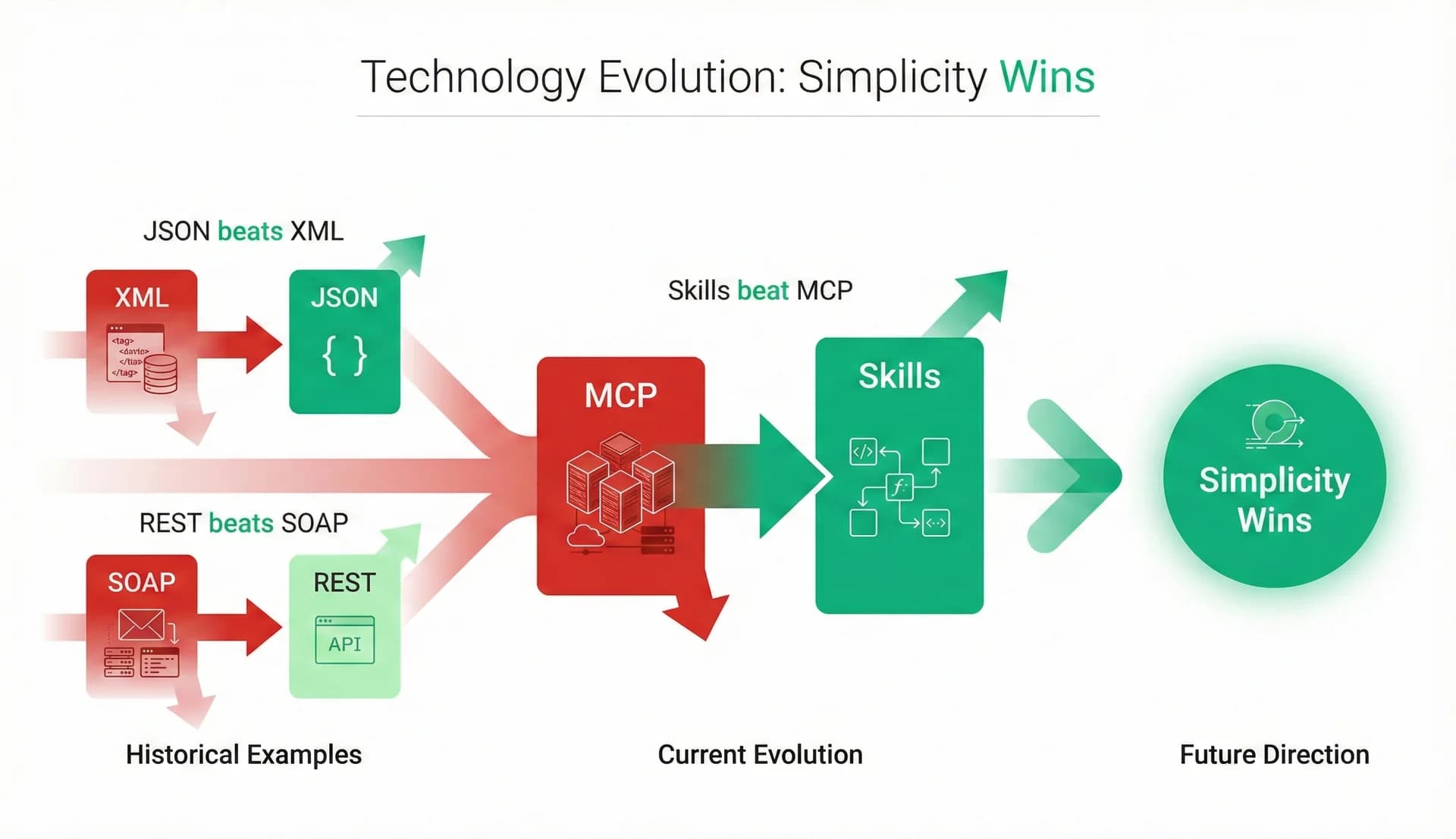

JSON beats XML. REST beats SOAP. Skills beat MCP.

The Pattern of Simplicity Winning

The skills convergence exemplifies a broader pattern in technology: simple solutions that get out of the way tend to win over time, regardless of initial enthusiasm for complex alternatives.

Model Context Protocol launched in late 2024 with enormous buzz. Companies rushed to announce implementations. The industry positioned MCP as the future of AI capability extension. Yet roughly a year later, OpenAI quietly adopted the simpler skills pattern.

History Repeats: Simplicity Wins

The pattern repeats: initial enthusiasm for comprehensive solutions that handle every edge case, followed by recognition that simpler approaches handle most cases better. Skills fit this pattern perfectly. They don't handle everything MCP does. They handle most things most people need, with far less complexity.

Skills succeed not despite their simplicity but because of it. They make the minimum assumptions necessary to work. They demand the minimum infrastructure necessary to function. They impose the minimum conceptual overhead necessary to understand. Minimums often maximize impact.

History repeats: simplicity wins. From data formats to web services to AI capabilities, the simpler solution that gets out of the way consistently beats the comprehensive framework.

The Skills Explosion: Hundreds to Thousands by Mid-2026

What Comes Next

The convergence is still early. OpenAI's adoption became public knowledge in mid-December 2025. Other AI platforms are watching. Some will adopt skills support. Others might create compatible alternatives. The ecosystem will grow, iterate, and evolve.

Predictions for 2026

Expect skill repositories to proliferate. GitHub already hosts dozens of skills. That number will grow to hundreds, then thousands. Quality will vary. Curation mechanisms will emerge. Recommended skill collections will appear. The ecosystem follows predictable open source patterns.

Expect unexpected applications. Skills enable workflows that their creators didn't anticipate. Packaging expertise in reusable, executable formats opens doors. Someone will build something surprising that wouldn't have been possible without skills. Innovation happens when tools become accessible.

The best tools fade into the background, enabling work without demanding attention. Skills are heading that direction. Not proprietary features to market. Not complex specifications to master. Just a simple pattern that works, letting people focus on what matters: the capabilities themselves, not the mechanisms for delivering them.

Looking ahead: from dozens of skills on GitHub today to hundreds and then thousands by mid-2026, curated collections emerging, and unexpected applications we can't yet imagine. The ecosystem is just getting started.

When competitors converge, pay attention.

Markets are signaling something important.

In this case, the signal is clear: simplicity works.

Written by the 10X Team

Building the future of AI-powered workflows. We help teams package their expertise into skills that scale.
