Model Context Protocol (MCP) arrived with massive buzz, promising to standardize how AI systems access external capabilities. Companies rushed to announce MCP implementations as proof of their AI strategy. The specification was comprehensive, the architecture was sophisticated, the promise was transformative.

Then Skills arrived, and everything changed.

Skills are so simple they barely qualify as technology. A markdown file explaining how to do something. Optional supporting scripts. Maybe some reference documentation. That's it. No protocol specification. No client-server architecture. No complex transport layers. Just files in folders that AI agents can read.

The simplicity isn't a limitation. It's the entire value proposition. Skills succeed precisely because they refuse to be more complicated than necessary.

The dismissal from some corners was predictable: “this is too simple to be a real feature.” People have been dropping instruction files into directories for months, the argument goes, so there's nothing new here. That dismissal misses the point entirely.

The Complexity Tax We've Been Paying

When Infrastructure Becomes the Bottleneck

Model Context Protocol represented the industry's best thinking about AI capability extension. The specification covered hosts, clients, servers, resources, prompts, tools, sampling, roots, and multiple transport mechanisms. Companies built entire engineering teams around MCP implementation. The protocol promised standardization, interoperability, and endless extensibility.

Then reality set in. GitHub's official MCP implementation alone consumes tens of thousands of tokens before the AI can do any actual work. Add a few more MCP servers and the context window fills up with protocol overhead, leaving precious little space for the task itself. The very mechanism designed to expand AI capabilities ended up constraining them through sheer token consumption.

The problem wasn't implementation quality. The problem was fundamental architecture. Complex protocols require detailed specifications in context. Every capability needs explicit definition. Every interaction demands structured metadata. The AI needs to understand the protocol before it can use any individual capability.

Beyond token costs, complex protocols create knowledge barriers. Building an MCP server requires understanding the protocol specification, implementing transports correctly, handling lifecycle events, and managing state appropriately. Only developers willing to invest significant learning time can extend the system.

Skills take the opposite approach. Want to add a new capability? Write a Markdown file explaining how to do it. That's the entire barrier to entry.
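To make "write a Markdown file" concrete, here is a minimal sketch that creates a one-file skill. It assumes the folder layout used by Anthropic's published skills: a directory containing a `SKILL.md` whose YAML frontmatter carries a `name` and a `description`, followed by plain instructions. The `csv-summary` skill and the `create_skill` helper are hypothetical.

```python
import tempfile
from pathlib import Path

# Hypothetical example skill. The frontmatter fields (name, description)
# follow the SKILL.md convention; the skill's content is made up.
SKILL_MD = """\
---
name: csv-summary
description: Summarize a CSV file's row count, columns, and empty cells.
---

# CSV summary

1. Open the CSV file the user points to.
2. Report the number of rows and the column names.
3. For each column, count empty cells and flag any column where they exceed 5%.
"""


def create_skill(root: Path, name: str, skill_md: str) -> Path:
    """Write a one-file skill at <root>/<name>/SKILL.md and return its path."""
    skill_dir = root / name
    skill_dir.mkdir(parents=True, exist_ok=True)
    skill_file = skill_dir / "SKILL.md"
    skill_file.write_text(skill_md, encoding="utf-8")
    return skill_file


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        path = create_skill(Path(tmp), "csv-summary", SKILL_MD)
        print(path.relative_to(tmp).as_posix())  # csv-summary/SKILL.md
```

Everything below the closing `---` is ordinary prose; the only structure is the short frontmatter block.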

The Outsourcing Strategy

Let the AI Do the Hard Parts

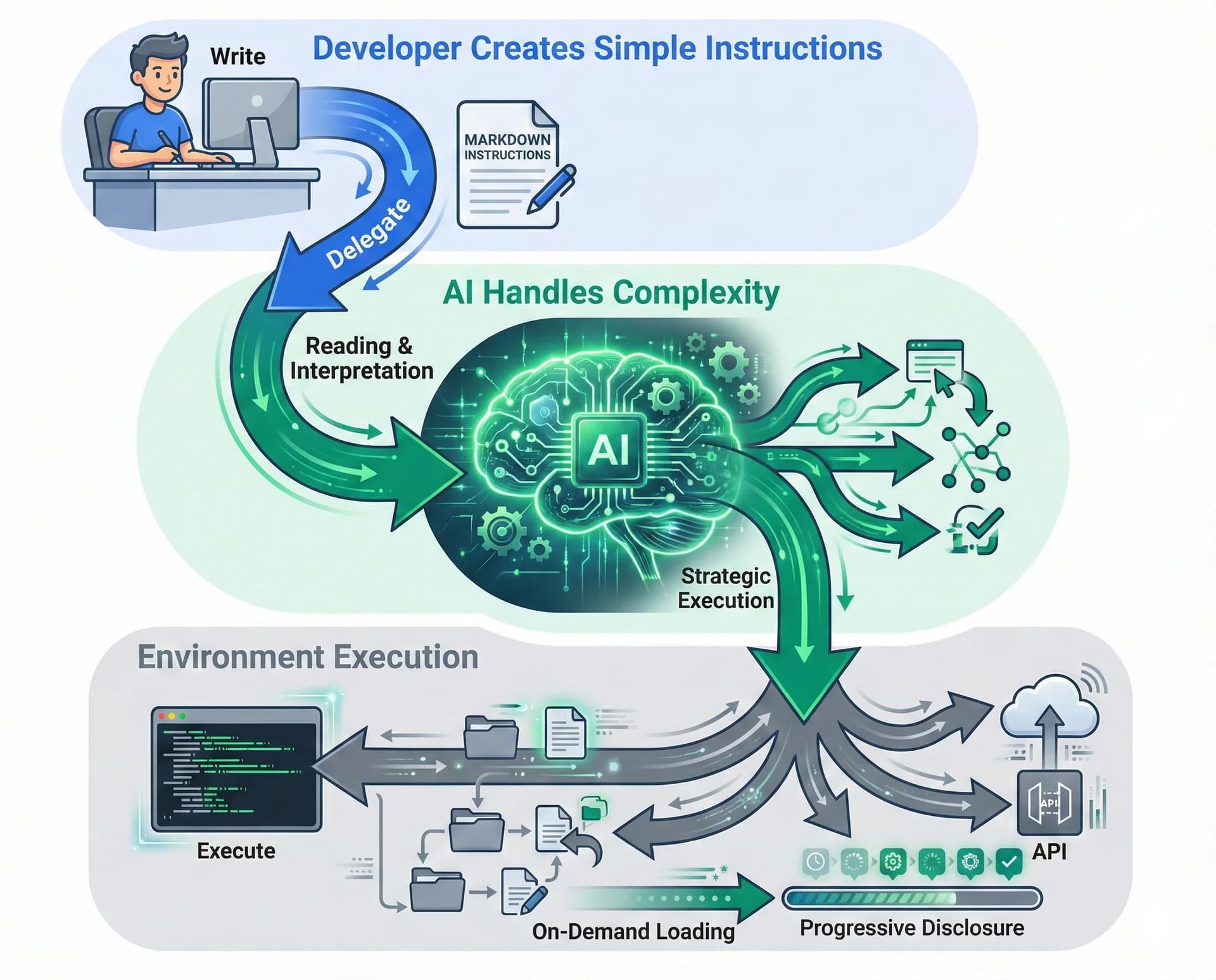

Skills work through strategic outsourcing. Instead of building elaborate capability frameworks, they hand the complexity to two things AI systems already excel at: reading instructions and executing code in an environment.

MCP Approach

- Define capabilities explicitly in protocol format
- Implement handlers for each capability
- Manage state and lifecycle
- Ensure specification conformance
- Protocol does the heavy lifting

Skills Approach

- Write markdown explaining the task
- Include scripts for deterministic operations
- Add reference documentation as needed
- AI understands and executes
- AI does the heavy lifting

This reversal of responsibility changes everything. MCP puts the burden on developers to translate capabilities into protocol-compliant structures. Skills put the burden on AI to understand plaintext instructions. Given how good modern AI has become at reading and following instructions, this is a sensible trade.

The environment does the rest. Skills depend entirely on the AI having access to a filesystem, navigation tools, and command execution. This is a significant dependency, but one that's increasingly common. ChatGPT pioneered this with Code Interpreter in early 2023. The pattern extended to local machines through Cursor, various coding agents, and command-line tools.

A markdown file can instruct the AI to run scripts, read reference documentation, check validation functions, and iterate based on results—all within the execution environment. The skill provides the roadmap. The environment provides the tools. The AI navigates between them intelligently.
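On the environment side, "run a script" usually reduces to a generic command-execution tool. The sketch below is a hypothetical stand-in for such a tool: `run_skill_script` runs a Python file bundled with a skill and hands back the exit code and output for the model to read on its next step. The `scripts/check.py` path is an assumed layout, not a requirement of the skill format.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def run_skill_script(script: Path, *args: str, timeout: float = 60.0) -> tuple[int, str]:
    """Run a Python script bundled with a skill; return (exit code, stdout + stderr).

    Stands in for the generic "execute a command" tool an agent environment
    provides; nothing here is specific to any one skill.
    """
    result = subprocess.run(
        [sys.executable, str(script), *args],
        capture_output=True,
        text=True,
        timeout=timeout,
    )
    return result.returncode, result.stdout + result.stderr


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        # Hypothetical bundled script at <skill>/scripts/check.py.
        script = Path(tmp) / "scripts" / "check.py"
        script.parent.mkdir()
        script.write_text("import sys\nprint('checked', sys.argv[1])\n", encoding="utf-8")
        code, output = run_skill_script(script, "report.csv")
        print(code, output.strip())  # 0 checked report.csv
```

The skill's markdown decides when to call the script and what to do with its output; the tool itself stays generic.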

Token Efficiency Through Progressive Disclosure

The Economics of Context Windows

The token economics tell the story clearly. An MCP implementation might consume 30,000 to 50,000 tokens describing available capabilities before any work begins. Skills consume roughly 100 tokens per skill for frontmatter metadata. The full skill instructions load only when relevant to the task at hand.
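A minimal sketch of that progressive disclosure, assuming each skill lives in its own folder with a `SKILL.md` that opens with `name`/`description` frontmatter. Only the one-line summaries are assembled for the context window up front; a skill's full file is read when a task selects it. The names `build_index`, `index_prompt` and `load_skill` are hypothetical, and the parser handles only flat `key: value` lines, not full YAML.

```python
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass
class SkillSummary:
    name: str
    description: str
    path: Path


def read_frontmatter(skill_file: Path) -> dict[str, str]:
    """Read flat `key: value` lines between the opening and closing '---'.

    Stops at the closing fence, so the skill body is never read here.
    """
    meta: dict[str, str] = {}
    with skill_file.open(encoding="utf-8") as f:
        if f.readline().strip() != "---":
            return meta
        for line in f:
            if line.strip() == "---":
                break
            key, sep, value = line.partition(":")
            if sep:
                meta[key.strip()] = value.strip()
    return meta


def build_index(skills_root: Path) -> list[SkillSummary]:
    """Collect name + description for every <skills_root>/*/SKILL.md."""
    index = []
    for skill_file in sorted(skills_root.glob("*/SKILL.md")):
        meta = read_frontmatter(skill_file)
        if meta.get("name") and meta.get("description"):
            index.append(SkillSummary(meta["name"], meta["description"], skill_file))
    return index


def index_prompt(index: list[SkillSummary]) -> str:
    """The only skill text placed in context up front: one short line per skill."""
    return "\n".join(f"- {s.name}: {s.description}" for s in index)


def load_skill(index: list[SkillSummary], name: str) -> str:
    """Read a skill's full SKILL.md only when the task calls for it."""
    for s in index:
        if s.name == name:
            return s.path.read_text(encoding="utf-8")
    raise KeyError(name)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        for name, desc in [("csv-summary", "Summarize a CSV file."),
                           ("slack-gif", "Make GIFs that fit Slack limits.")]:
            (root / name).mkdir()
            (root / name / "SKILL.md").write_text(
                f"---\nname: {name}\ndescription: {desc}\n---\n\nStep-by-step instructions go here.\n",
                encoding="utf-8",
            )
        index = build_index(root)
        print(index_prompt(index))             # metadata only
        print(load_skill(index, "slack-gif"))  # full file, loaded on demand
```

How an agent decides which skill to load is left to the model reading the index; the code only shows that the body stays out of context until it is asked for.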

Progressive Disclosure in Action

The efficiency compounds with scale. Adding ten MCP servers might consume 100,000 additional tokens or more. Adding ten skills consumes roughly 1,000 tokens for metadata, with full content loaded only for the skill actually used. The more capabilities you add, the more dramatic the efficiency advantage becomes.
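The scaling argument is simple multiplication. This sketch uses the article's own illustrative figures: about 100 metadata tokens per skill, and about 10,000 tokens per MCP server, which is inferred from the ten-servers, 100,000-tokens estimate rather than measured. Real servers and skills vary widely.

```python
# Illustrative figures from the paragraphs above, not measurements:
# ~100 metadata tokens per skill, ~10,000 tokens per MCP server
# (inferred from "ten servers ~ 100,000 tokens").
SKILL_METADATA_TOKENS = 100
MCP_TOKENS_PER_SERVER = 10_000


def mcp_overhead(n_servers: int, per_server: int = MCP_TOKENS_PER_SERVER) -> int:
    """Tokens spent describing every server's capabilities before work begins."""
    return n_servers * per_server


def skill_overhead(n_skills: int, active_body_tokens: int = 0,
                   per_skill: int = SKILL_METADATA_TOKENS) -> int:
    """Metadata for every skill, plus the full body of whichever skill is in use."""
    return n_skills * per_skill + active_body_tokens


if __name__ == "__main__":
    for n in (1, 10, 50):
        print(f"{n:>2} capabilities: MCP ~{mcp_overhead(n):,} tokens, "
              f"skills ~{skill_overhead(n):,} tokens")
```

At ten capabilities that is 100,000 tokens against 1,000; loading one skill body of, say, 5,000 tokens (a hypothetical size) brings the skills side to 6,000.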

This isn't just theoretical optimization. Token limits constrain what AI systems can accomplish. Every token spent on protocol overhead is a token unavailable for actual work. Skills keep that overhead to a minimum, leaving context-window space for the tasks that matter.

Progressive disclosure in action: skills load only what's needed when it's needed, keeping context windows available for actual work instead of protocol overhead.

Seeing Skills Work: The Slack GIF Pattern

From Theory to Tangible Results

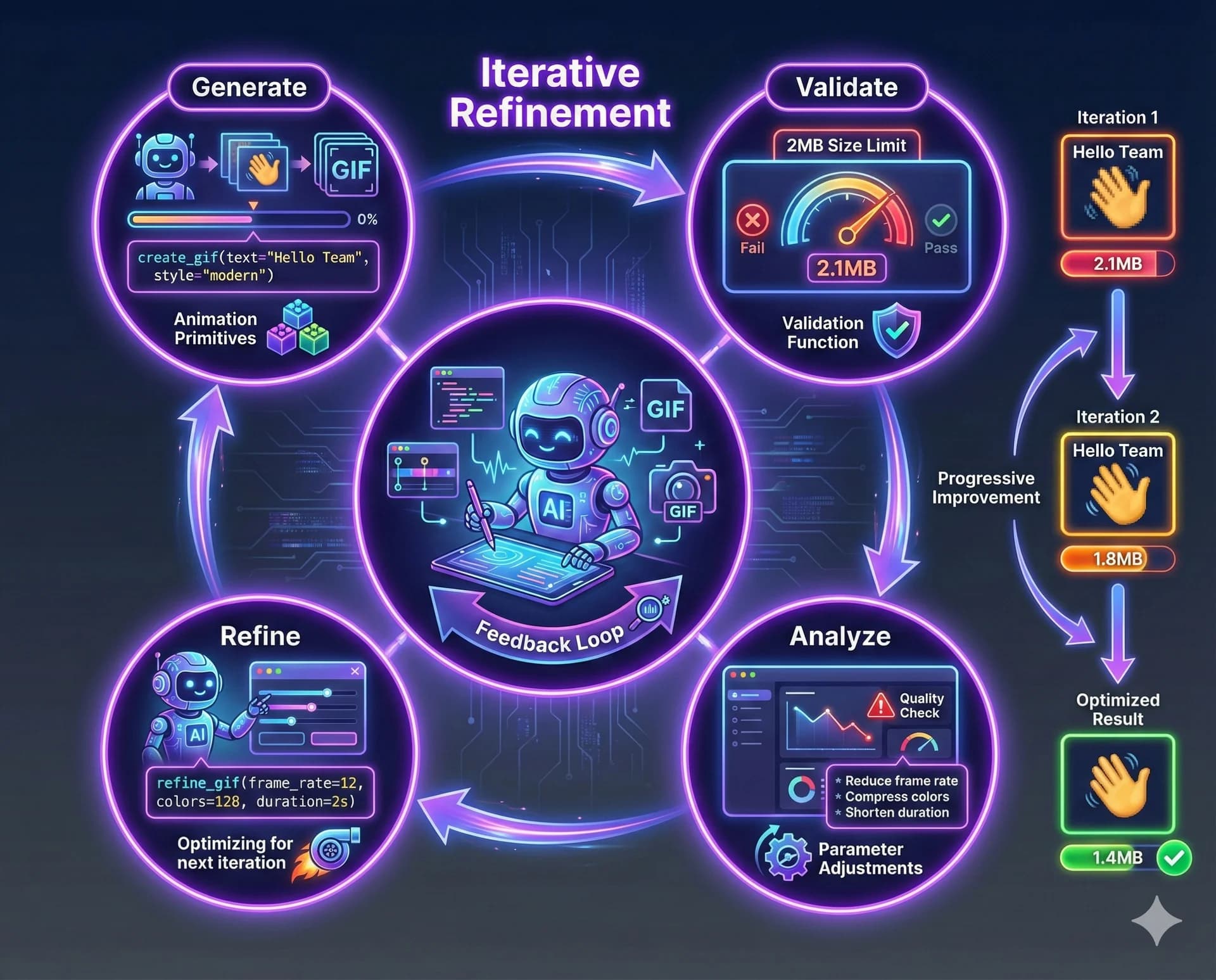

Abstract architectural discussions miss the tangible reality of what skills enable. Consider the Slack GIF creation skill Anthropic published. The skill's purpose is to create animated GIFs optimized for Slack's size constraints.

The skill includes instructions for composition, animation timing, and file size management. It bundles a GIF builder library with reusable animation primitives. Most interestingly, it includes a validation function checking whether generated GIFs meet Slack's 2MB size limit.

1. Generate a GIF following the skill instructions.

2. Run the validation check: is the file under 2MB?

3. If it's too large, iterate with smaller parameters.

4. Repeat until the constraints are met.

This validation creates a feedback loop. The AI generates a GIF following skill instructions. It runs the validation function. If the file is too large, the AI iterates, making it smaller while preserving quality. The skill transformed GIF creation from a one-shot attempt to an iterative refinement process.

The skill didn't just tell the AI how to make GIFs. It gave the AI tools for making good GIFs through iteration. The workflow becomes: attempt, validate, refine, repeat until good enough.

Skills in action: the Slack GIF creation workflow demonstrates how skills package complex multi-step processes with validation loops, transforming one-shot attempts into iterative refinement.
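The attempt, validate, refine loop can be sketched in a few lines. This is not the code from Anthropic's published skill: `within_limit`, `generate_until_valid` and the shrink-width-then-frames heuristic are hypothetical, `fake_render` stands in for a real GIF encoder, and reading "2MB" as 2 * 1024 * 1024 bytes is an assumption.

```python
from typing import Callable

# The article cites a 2MB Slack limit; 2 * 1024 * 1024 bytes is an assumed reading.
SLACK_GIF_LIMIT_BYTES = 2 * 1024 * 1024


def within_limit(gif: bytes, limit: int = SLACK_GIF_LIMIT_BYTES) -> bool:
    """Validation step: is the encoded GIF under the size limit?"""
    return len(gif) < limit


def generate_until_valid(
    render: Callable[[int, int], bytes],
    width: int = 480,
    frames: int = 48,
    max_attempts: int = 10,
    min_width: int = 128,
    min_frames: int = 8,
) -> tuple[bytes, dict[str, int]]:
    """Attempt, validate, refine, repeat: shrink width first, then frame count."""
    for attempt in range(1, max_attempts + 1):
        gif = render(width, frames)
        if within_limit(gif):
            return gif, {"attempt": attempt, "width": width, "frames": frames}
        if width > min_width:
            width = max(min_width, int(width * 0.8))
        elif frames > min_frames:
            frames = max(min_frames, int(frames * 0.8))
        else:
            break  # nothing left to shrink
    raise RuntimeError("could not meet the size limit within the allowed attempts")


def fake_render(width: int, frames: int) -> bytes:
    """Stand-in renderer: output size simply grows with width^2 * frames."""
    return bytes(width * width * frames // 4)


if __name__ == "__main__":
    gif, info = generate_until_valid(fake_render)
    print(info, len(gif))  # {'attempt': 2, 'width': 384, 'frames': 48} 1769472
```

With the stand-in renderer, the first attempt at 480 px produces about 2.76 million bytes and fails validation; shrinking the width to 384 px brings it under the limit on the second attempt. Which parameter to shrink first is a quality trade-off a real skill's instructions would spell out.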

Where MCP Fits and Why Skills Will Scale Further

Finding the Right Tool for the Job

This isn't an argument for abandoning Model Context Protocol. MCP serves important use cases, particularly for controlled enterprise environments where explicit capability definitions matter more than token efficiency. Some organizations need the structure MCP provides.

But for the broader ecosystem, skills offer overwhelming advantages:

- Zero creation barrier: write markdown, and you're done.
- Trivial sharing: skills are just files in repositories.
- No learning curve: if you can write, you can skill.
- Format agnostic: works with any capable AI.

Most importantly, skills are format-agnostic. You can use skill files with any AI system that has filesystem access and execution capabilities. Point a different coding agent at a skills folder and it works, even if that tool has no built-in knowledge of skills. The instructions are just plaintext, and any sufficiently capable AI can follow them.

This portability matters enormously for ecosystem development. MCP implementations are specific to MCP-aware systems. Skills work anywhere an agent can read files and run commands.

The Coming Explosion of Capabilities

When Everyone Can Contribute

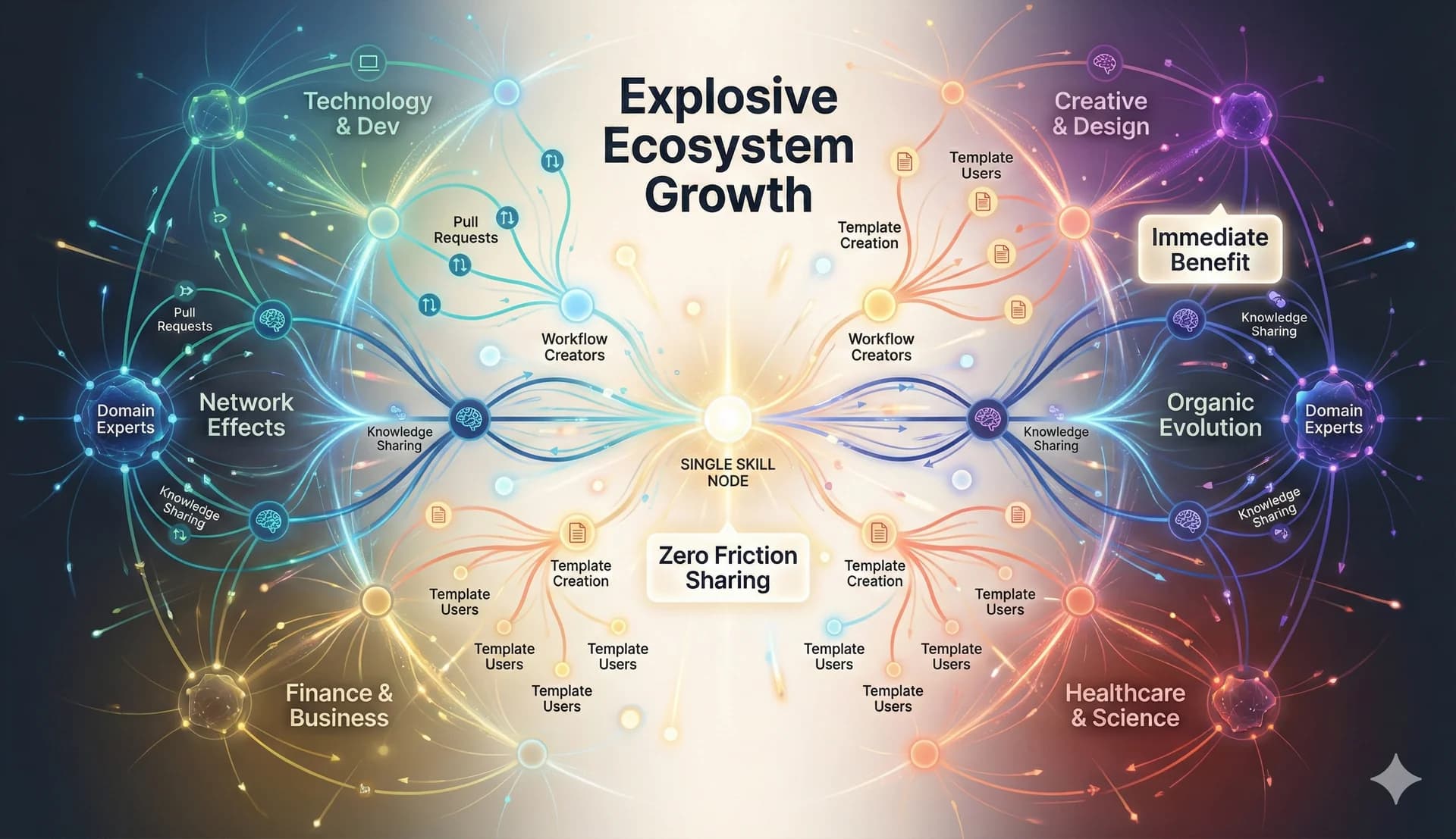

The ease of creating and sharing skills points toward explosive ecosystem growth. One markdown file can capture valuable expertise. Drop it in a repository and anyone can benefit. The friction between discovering a useful workflow and making it available to others approaches zero.

Compare this to previous capability extension mechanisms. ChatGPT plugins required building web services, implementing authentication, conforming to specifications, and submitting for approval. The barrier was high enough that only companies invested the effort. Individuals rarely bothered.

MCP reduced some barriers but still required understanding protocol specifications, implementing servers, and managing transports. The learning investment remained substantial.

Skills eliminate learning investment entirely. If you've figured out a useful workflow, you can make it a skill. Write markdown explaining the steps. Include any helpful scripts. Add reference documentation if needed. Share the folder. Others benefit immediately.

This accessibility will drive creation volume far beyond what MCP achieved. Every domain expert who documents their expertise creates skills. Every workflow worth repeating becomes shareable. The library grows not just from AI companies or tool developers, but from anyone with knowledge worth encoding.

Ecosystem growth trajectories: MCP's centralized approach versus Skills' distributed, community-driven expansion. The lower barrier to entry creates exponential adoption curves.

The Best Technology Is Technology You Don't Notice

Aligning with AI's Natural Strengths

The pushback against skills as “not really a feature” reveals a deep misunderstanding about what makes technology powerful. The goal isn't complexity for its own sake. The goal is solving problems effectively, and often that means choosing the simplest approach that works.

Skills succeed because they align with how AI systems actually function. Modern language models are remarkably good at reading instructions and following them. They understand natural language better than they understand any structured protocol. They can navigate filesystems, execute commands, and iterate based on results. Skills leverage these strengths instead of working around them.

The design philosophy extends to ecosystem development. Instead of trying to standardize everything upfront through specifications, skills let patterns emerge naturally. Instead of requiring deep technical knowledge to contribute, skills welcome anyone who can explain things clearly. Instead of managing complexity centrally, skills distribute it across creators who each handle their specific domain.

What This Means for AI Development

A Philosophical Shift in Capability Extension

Skills represent a philosophical shift in how we think about extending AI capabilities. The industry assumed we needed elaborate frameworks, careful standardization, structured protocols. Skills suggest we actually needed to get out of the way and let AI systems do what they're already good at.

This has implications beyond capability extension. It suggests that many problems we're solving with complex engineering might have simpler solutions if we trusted AI systems more. Not every integration needs a protocol. Not every capability needs explicit structure. Sometimes, providing good instructions and letting the AI figure it out works better.

Who Can Now Contribute Capabilities

Previously, extending AI systems required software developers to build integrations. Skills let domain experts contribute directly by documenting expertise. This democratization matters for ecosystem health. The more diverse the contributor base, the more varied the capabilities, the more use cases get addressed.

The philosophical divide: rigid, centralized protocols versus distributed, adaptive simplicity. Skills democratize AI capability extension, welcoming domain experts to contribute directly.

If You Can Explain It, You Can Skill It

The Path Forward

For teams considering how to extend AI capabilities, skills offer a clear path. Start by identifying workflows you repeat frequently or expertise you wish others could access. Document these as markdown files explaining the process. Include scripts for operations that benefit from deterministic execution. Add reference documentation for deep knowledge. Test the skills with actual use cases. Iterate based on what works and what doesn't.
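Testing with real use cases is the part that matters, but a quick mechanical check catches the cheapest mistakes before a skill is shared. A sketch, assuming the `SKILL.md`-with-frontmatter layout and that bundled scripts are referenced by relative paths under `scripts/`; `check_skill` is hypothetical and says nothing about whether the instructions themselves work.

```python
import re
import tempfile
from pathlib import Path

# Assumed convention: bundled files are referenced in SKILL.md as relative
# paths under scripts/, e.g. "Run scripts/validate.py".
SCRIPT_REF = re.compile(r"scripts/[\w./-]+")


def check_skill(skill_dir: Path) -> list[str]:
    """Return problems found in one skill folder (an empty list means none found)."""
    skill_file = skill_dir / "SKILL.md"
    if not skill_file.is_file():
        return [f"{skill_dir.name}: missing SKILL.md"]
    text = skill_file.read_text(encoding="utf-8")
    lines = text.splitlines()

    # Flat `key: value` frontmatter between the first two '---' lines.
    meta: dict[str, str] = {}
    if lines and lines[0].strip() == "---":
        for line in lines[1:]:
            if line.strip() == "---":
                break
            key, sep, value = line.partition(":")
            if sep:
                meta[key.strip()] = value.strip()

    problems = []
    for field in ("name", "description"):
        if not meta.get(field):
            problems.append(f"{skill_dir.name}: frontmatter lacks '{field}'")

    # Every referenced script should ship with the skill (strip sentence-final dots).
    refs = {m.rstrip(".") for m in SCRIPT_REF.findall(text)}
    for ref in sorted(refs):
        if not (skill_dir / ref).is_file():
            problems.append(f"{skill_dir.name}: referenced file {ref} not found")
    return problems


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        skill = Path(tmp) / "slack-gif"
        skill.mkdir()
        (skill / "SKILL.md").write_text(
            "---\nname: slack-gif\n---\n\nAfter rendering, run scripts/validate.py.\n",
            encoding="utf-8",
        )
        for problem in check_skill(skill):
            print(problem)
```

Run against the example folder, it reports the missing `description` and the absent `scripts/validate.py`; fixing both leaves an empty list.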

For individual contributors, the barrier to participation is writing clear instructions. If you've figured out a useful workflow, document it. If others might benefit from your expertise, share it. The mechanics are simpler than traditional open source contribution. No complex setup required. Just clear explanation of what works.

The coming months will reveal whether this analysis holds. Will skills truly drive the capability explosion this article predicts? Or will complexity creep in as adoption grows? The early signs point toward genuine disruption. The simplicity is proving to be a feature, not a limitation.

The power isn't in the protocol.

It's in the simplicity.

However the details play out, skills demonstrate something important about AI development. We don't always need more complexity. Sometimes we need less. Sometimes the best solution is the one that trusts the AI to understand plaintext and figure things out. Sometimes markdown files really are enough.

Written by the 10X Team

Building the future of AI-powered workflows. We help teams package their expertise into skills that scale.
